Thu Mar 30, 2023

The great AI Gold Rush has begun.

Given the hype, it’s tempting to dismiss it all: the constant flow of Twitter posts on “Ten ChatGPT prompts that will blow your mind 🤯”, the thousands of shoddy AI start-ups flooding the app store, and the usual assortment of blustering, opportunistic idiots who have scratched “AR” or “NFTs” out of their bios and replaced them with “AI all the time, every time”.

But, there are actual concrete products - not just vapourware - emerging out of the hype.

Microsoft, for example, is aggressively embedding AI into all their core Office products with a supercharged successor to Clippy they call “Copilot”. They promise to eliminate the drudgery of office work. They’re probably not wrong (although they may also eliminate working-class, admin-focused office jobs at the same time).

Numerous other high-profile companies, from Duolingo to Expedia, are integrating ChatGPT plugins into their products, bringing AI to the mainstream.

In a rare display of enthusiasm, even Bill Gates has declared that “The Age of AI has begun” (the title of his latest blog post). But, when discussing the risks and problems with AI, he says this:

“Then there’s the possibility that AIs will run out of control. Could a machine decide that humans are a threat, conclude that its interests are different from ours, or simply stop caring about us? Possibly, but this problem is no more urgent today than it was before the AI developments of the past few months.”

Wait, what?

Shouldn’t this “problem” be a whole lot more urgent today? When Bill Gates declared that the Age of AI has begun, he wasn’t being hyperbolic or bombastic. I believe he is right - the world is about to get crazy. But, given the rapid, exponential pace of AI development, is he right to dismiss the threat of AI malevolence so casually?

To be sure, there are a lot of intelligent people out there who are downplaying the hype. Currently, all the AI bots out there have a tendency to “hallucinate” and present made-up nonsense as facts. There is a sense that we’re being duped by the oldest parlour trick in the book of snake-oil salesmen, a trick that amounts to no more than smoke and mirrors.

Neil Gaiman rightly points out that “ChatGPT doesn’t give you information. It gives you information-shaped sentences.”

The Guardian, not mincing words, published an article entitled “The stupidity of AI”, which warns against the notion that these bots are actually intelligent when they are simply based on the “wholesale appropriation of existing culture”. This warning does raise the question: When all human “content creators” are phased out in favour of generative AI, what happens when no more original human data is being created?

Will AI become recursive, cannibalizing itself, or will it learn to evolve beyond the constraints of its training data? Will anybody bother to learn how to write well anymore? It seems obvious that the days of formulaic writing are over; perhaps only the best of us will continue to pursue writing as an art form.

Eminent linguist Noam Chomsky, who at 94 years old is still as sharp as barbed wire, co-wrote a piece for the New York Times entitled “The False Promise of ChatGPT”. In it, he laments the time and attention we’re giving to something that is no more than a pale imitation of the incredible human mind. He says:

“The human mind is not, like ChatGPT and its ilk, a lumbering statistical engine for pattern matching, gorging on hundreds of terabytes of data and extrapolating the most likely conversational response or most probable answer to a scientific question. On the contrary, the human mind is a surprisingly efficient and even elegant system that operates with small amounts of information; it seeks not to infer brute correlations among data points but to create explanations.”

Well, when you put it that way… it does seem silly to make all this fuss over something that doesn’t even have a soul.

But, what will happen when, instead of asking an AI to parse the entire internet - where it is all but impossible to sort out fact from fiction - we give it a corporeal presence? I’d be far more wary of a self-learning superintelligence housed in an autonomous body of sorts, with the senses of sight, touch, and hearing provided by enhanced, network-connected sensors.

I wonder if anyone at OpenAI has given Boston Dynamics a call? I wouldn’t be surprised. Brace yourselves.

Thankfully, we aren’t quite there yet, although that reality may be coming sooner than we think. For now, the question all burnt-out white-collar workers are mulling over is this: Will AI give us more free time for creative pursuits, or simply lengthen the lineups at the EI office?

The idea of automated productivity freeing us so that we can work less is an old one. Back in 1930, economist John Maynard Keynes predicted that labour-saving technologies would give us a fifteen-hour workweek.

Obviously, that didn’t happen. And even though the idea of a four-day workweek has gained traction recently, this flawed concept misses the point entirely: “Three-quarters of workers said they would put in four 10-hour days in exchange for an extra day off a week”. That’s still a 40-hour workweek, folks - do the math.

I could scribble furiously for several more pages on why we have a forty-hour workweek or why we have to pay taxes, or why any number of “temporary” solutions from the Industrial Age never went away, but I want to keep my hair.

The late anthropologist David Graeber even went so far as to posit that, in order to “maintain the power of finance capital”, the ruling class have made up a bunch of pointless jobs just to keep us all working. He called this the Phenomenon of Bullshit Jobs.

If his theory holds true, then no amount of AI assistance will hasten us to a utopia where we’re all living off universal basic income. Instead, new jobs will be created in the future that we haven’t even thought of yet. AI Manager? Neural ChatGPT Interface Developer? His Excellency, the Human Ambassador to the AI Metaverse?

Hitting close to home, I’ve already seen a Technical Writer job posting asking for experience “leveraging AI to expedite your editing/content writing with tools such as ChatGPT”.

Yikes. After reading this, I scrambled to ask Bing AI (which runs on GPT-4) to edit a few paragraphs that I had recently edited myself. When I compared our two edits, it was clear that mine was far superior. So, not very helpful… yet. However, I have a feeling that anybody with their head in the sand is going to get steamrolled unless they start figuring out how AI is going to further their career instead of replacing it.

So, I’ve been tinkering… but am ambivalent right now. I’m starting to find AI surprisingly useful while feeling increasingly uneasy at the rapid pace of AI development.

Eight years ago, deep thinker and author Tim Urban saw this coming and published a mega-post on the AI Revolution and the road to superintelligence. His post made a big impression on me when I read it back in 2015, and I wrote a think piece about how VR and AI could intersect to kickstart The Matrix.

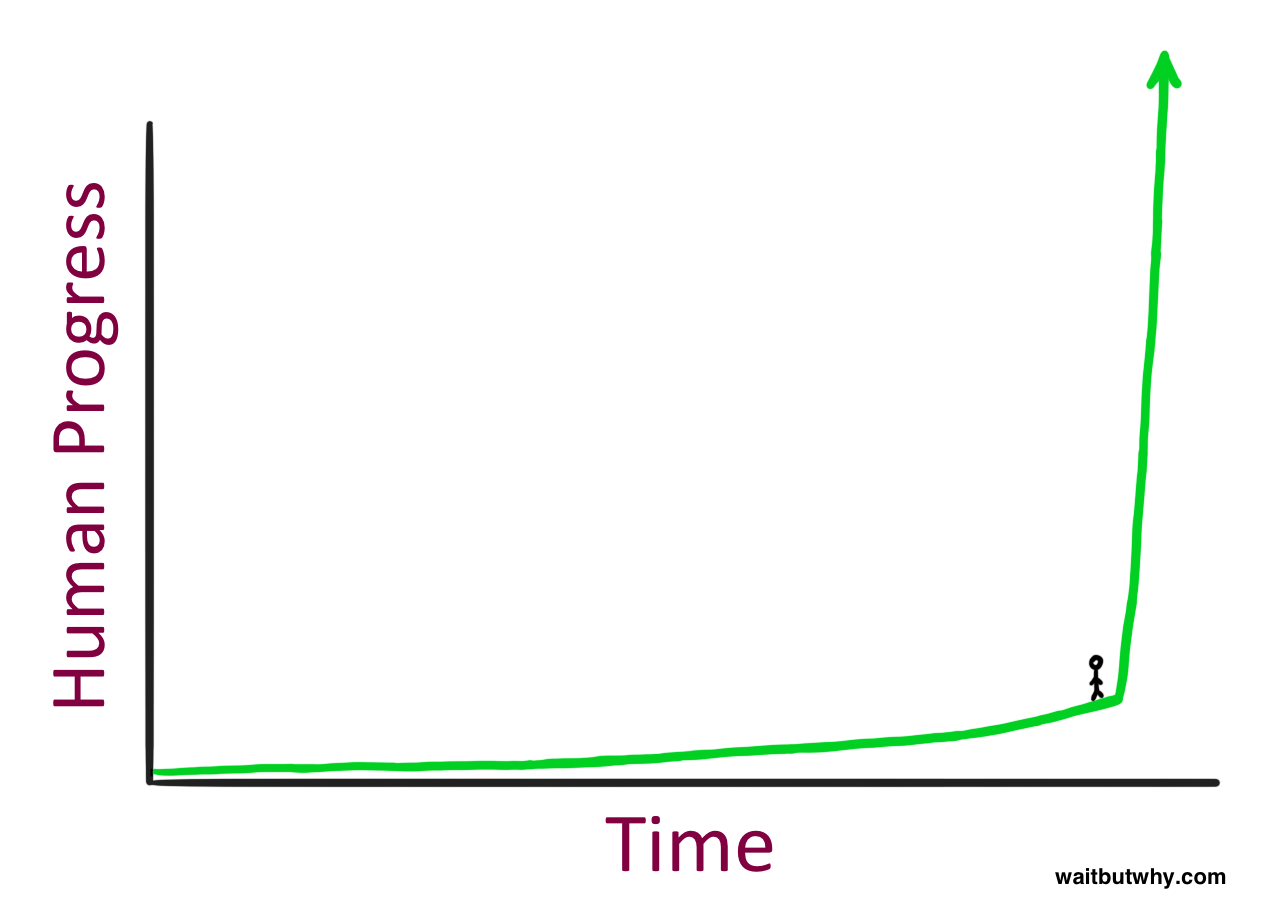

Anyway, he created this graph to show how exponential progress can take us by surprise:

Although it is impossible without hindsight to say where we are standing on this graph, it does seem that a lot of people are surprised. Ezra Klein calls this collective surprise “the difficulty of living in exponential time”, where the pace of improvement in the current crop of AI products is vastly outstripping the ability of society to react and respond to it.

Using the parlance of AI research (you’ll need to read Tim Urban’s piece to catch up), we haven’t yet achieved an “Artificial General Intelligence” (AGI). To recap, an AGI is the next level up from an “Artificial Narrow Intelligence” (ANI). Voice assistants, such as Siri or Alexa, fall into the ANI category. But an AGI would equal human intelligence across the board. Experts predict an AGI emerging around the year 2040, less than twenty years from now.

Honestly, at this point, it seems like we could get an AGI sooner than that. I hope I’m wrong, because we need some breathing room to recover from the AI whiplash.

A growing group of tech pioneers, including Elon Musk and Steve Wozniak, are calling for just that. They have signed an open letter saying the AI race is dangerous, and we need to pump the brakes before the human race gets hurt.

But did the greedy fortune hunters of the 19th-century Gold Rush heed warnings not to undertake the dangerous route to California, where they would almost certainly die of starvation or cholera on the treacherous Oregon Trail? No, of course not - the allure was too great. I’m sure this strongly worded online petition will have even less impact.

However, a decade from now, when we’re using the unbridled, creative power of generative AI with glee (Hey VideoGPT, make me a movie starring Sean Connery killing Nazis in an X-wing!), the student will surpass the master. The machine will become smarter than us, much faster than we thought possible, bringing the fun and games to a grinding halt. Then we’ll all be asking the question Tim Urban posed at the start of the 21st century:

Will it be a nice god?